I recently discovered that text-to-speech programs often pronounce the artificial intelligence initialism “A.I.,” especially in its more common form, “AI,” like the word “eye,” or, more ominously, “I.” It’s too bad the software doesn’t make a visual error instead, reading the uppercase “I” as a lowercase “L.” Hearing about how “Al” might one day take over the world would be a little easier to disbelieve, and at least a bit funnier.

Is this text-to-speech mispronunciation a simple and understandable error by AI, caused by the trickiness of absent periods? Or is it the first hint of artificial intelligence getting too big for its britches? Schoolkids haven’t caught on to this alternative pronunciation trick yet, but when they do, they’ll have a nice “honest lie” to mislead their teachers: “What do you mean? I told you AI (I) did that homework!”

A mere three years ago, I wrote a column about my introduction to ChatGPT and the problems that arose when I asked it to write a Hamilton Post column for me in my own style. I tried it again recently (strictly as an experiment, of course), and while the output was more impressive this time, it carried an unrelenting and unappealing sense of mimicry. AI is good at synthesizing writing, especially given a large data set to analyze, but it’s not good at coming up with new ideas. And if its ideas aren’t recycled, it’s probably because they’re completely fabricated.

AI’s tendency to “hallucinate”—that is, to satisfy a request by inventing information, rather than admit it can’t complete a task—is a well-documented by-product of its algorithmic programming. The frequency of hallucinations has been reduced as AI improves, but the threat is always lurking, demanding detailed fact-checking, just as any human-written work would require. Many observers have also noticed that AI tends to use lots of em dashes—but that’s a quirk I’m willing to forgive.

AI is limited by its data set: garbage in, garbage out. The various AIs are trained on material that’s available on the internet (no garbage there, surely), while millions of books, roughly 90% to 95% of all the books ever written, have never been digitized. Pending various legal challenges, AI might one day be able to draw on any book or information source ever published, but that day hasn’t come yet, so AI’s information is far from authoritative. Sometimes it’s more like listening to that weird friend who does “research” by watching YouTube videos and then shares a bunch of crazy ideas without vetting them first.

More disturbing than AI’s current (and possibly quite temporary) difficulties with scope and truth-telling are the effects that AI has on humans. More than 50% of new online articles are now generated by AI, contributing to a phenomenon popularly known as “brain rot,” the 2024 Word of the Year, defined by Oxford University Press as “the perceived deterioration of a person’s mental or intellectual state, particularly resulting from the overconsumption of low-quality, unchallenging, and trivial online content.” The average person spends more than two hours a day on social media, and the articles and posts we read there tend to avoid difficult words and complex concepts, often appealing to society’s lowest, or at least simplest, common denominator. While AI can generate complex writing, news fodder is usually intended to reach the widest possible audience, and that means dumbing things down. As a result, hours of social media “reading” don’t actually make you a better reader.

That matters because there’s a strong positive correlation between reading and critical thinking. In other words, people who read regularly tend to have better B.S. detectors than those who don’t. One recent study found that rates of reading for pleasure among children and adults have fallen 40% since 2003, and that explains an awful lot about the state of our country today.

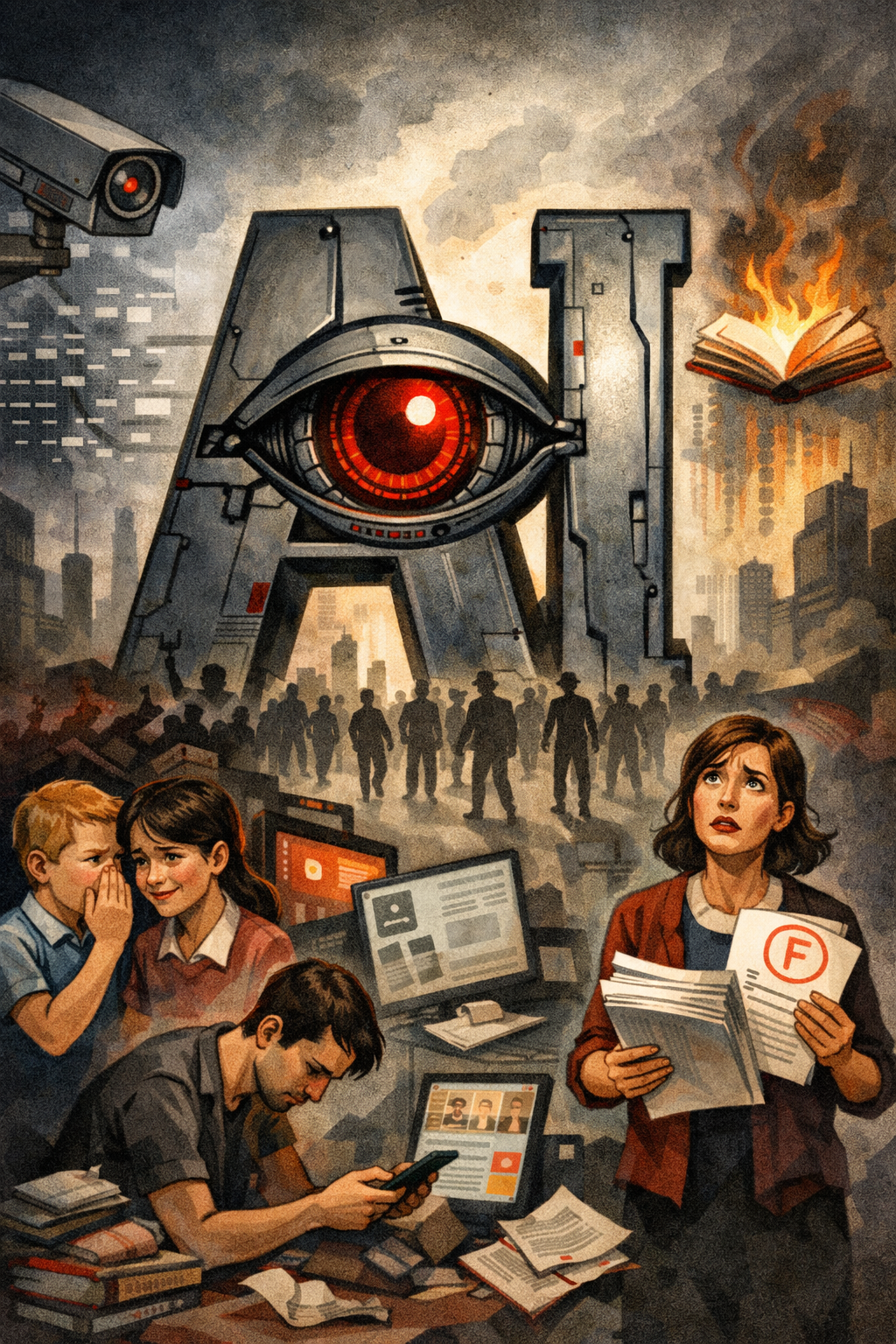

If we extrapolate these trends, the dystopian AI doomsday scenario starts to look less like The Terminator and more like George Orwell’s 1984 or Ray Bradbury’s Fahrenheit 451. AI can be a useful tool, making new discoveries possible in science and medicine. But I wonder whether reading and writing will one day be like going to the gym after driving everywhere all day: a paradoxical and unnecessary option, low-priority and easy to forgo.

According to polls, people prefer authenticity: they would rather read material written by humans than by AI. But in blind tests, readers often can’t tell the difference between AI-generated and human-written work. As a society, we may not yet know how to protect human writing, but we do seem to know, implicitly, that we should. Even if AI could draw from everything ever written, there’s little chance it would write something truly innovative. It might recombine words in impressive ways, but good writing is based on sharing original human experience. There are lots of things computers can do, but they can’t go for a walk or fall in love, at least not yet.

Is there a solution? During World War II, soldiers were given pocket-sized editions of more than 1,300 titles, by authors ranging from Abe Lincoln to Bob Feller. A 2002 legacy initiative for soldiers serving in Iraq and Afghanistan published only four titles. It’s an unlikely scenario, but imagine if every time you saw someone reach for a smartphone to kill a few minutes, they pulled out a book instead. Or a journal, to record the thoughts and events of the day.

This column wasn’t written by AI, but it almost could have been. You and I—not “AI”—need to remind ourselves that delegating brain work comes with a cost, and make our own decisions about when that cost becomes too high.

Image created with ChatGPT.